TERPECA horror selection bias analysis

Daniel Egnor, Oct 2025

Abstract

We investigated whether horror escape rooms get unfairly high rankings in TERPECA due to being mainly played by horror fans who rate them favorably. Using techniques borrowed from opinion polling, we reweighted votes to simulate what rankings might look like if everyone played everything, and found that actively scary horror rooms are indeed ranked very approximately 4 positions higher than they “should” be. Unfortunately, the correction method would have other consequences that are worse than the original bias. Our recommendation is to accept this small bias as a known limitation, and to continue labeling horror levels so people can account for their own preferences.

Background

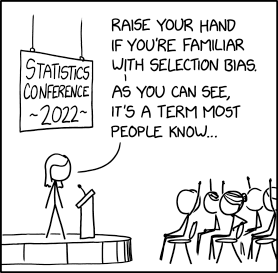

In the escape room community, there is a long-standing hypothesis that pairwise comparisons between horror and non-horror games are biased in favor of horror because horror games are mainly played by horror lovers, leading to an overall pro-horror bias in TERPECA results compared to a theoretical “ideal TERPECA” where every voter ranked every finalist.

In theory this effect could apply to any category people might preferentially play (action/adventure, narrative, etc), but horror is a uniquely divisive genre, and selection may be asymmetric if horror-loving voters enjoy other genres but horror-averse voters avoid even highly ranked horror games. For these reasons, this paper focuses on horror-type games and preferences.

IMPORTANT: The topic of horror and preference (and genre in general) in escape rooms (and media in general) is complex and sprawling: What is horror really? Why is it so divisive? How should voters think about varying preferences when ranking games? How should awards like TERPECA take varying preferences into account?

For the sanity of everyone concerned, this paper does NOT attempt to address any of those weighty questions, but ONLY attempts to estimate this one specific type of bias in TERPECA voting and ranking.

Readers are assumed to be familiar with the TERPECA nomination and voting process and the TERPECA ranking algorithm.

Methods

Established survey weighting techniques

The problem of selection bias in TERPECA resembles the well-studied problem of selection bias in public opinion surveys. In TERPECA, voter choice to play a game or not may be correlated with their preferences; in opinion surveys, individual choice to respond or not may be correlated with their opinions. Both cases seek to approximate a “perfect survey” with data from everyone in the target population.

Survey researchers often use weighting to compensate for selection bias. Survey weighting is a complex topic, but in practice surveys capture “normalization variables” (typically demographics such as age, gender identity, etc) as well as study variables (whatever the survey is measuring). Survey data points are weighted to bring the normalization variables in line with known population statistics, hoping that weighted study variables more closely approximate what whole-population values would be.

For example, if a target population has a known 48:48:4% male:female:nonbinary gender ratio, but survey responses have a 40:59:1% split, each data point might be assigned a weight of 1.2, 0.81, or 4.0 depending on reported gender (M/F/NB) to compensate for the overrepresentation/underrepresentation of each group in the survey.
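The weights in this example are the target share divided by the observed share for each group. A minimal Python sketch that reproduces them (the group labels are just illustrative dictionary keys):

```python
# Single-variable post-stratification weights:
# weight = (known population share) / (observed survey share).
target = {"M": 0.48, "F": 0.48, "NB": 0.04}    # known population ratio
observed = {"M": 0.40, "F": 0.59, "NB": 0.01}  # survey response ratio

weights = {group: target[group] / observed[group] for group in target}

for group, w in weights.items():
    print(f"{group}: {w:.2f}")
# M: 1.20
# F: 0.81
# NB: 4.00
```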

However, survey response weighting must be used with care:

- Weighting only helps if the normalization variables cover all major sources of correlation between survey response and study variables. For example, a survey normalized only by gender could miss age-related selection bias.

- Normalizing multiple variables at once (e.g., age and gender) is mathematically challenging, and standard multivariable weighting methods (“stratification”, “raking”, “matching”, etc) each have pros and cons.

- Weighting can magnify noise, especially if sparse data from undersurveyed groups is magnified (as for NB in the example above).

- Weighting requires population baseline statistics which are accurate, updated and comparable with survey results. Census data publication takes time, and variables like race and gender can be difficult to poll consistently.

- It is difficult to evaluate whether weighting actually improves survey accuracy.
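One common way to quantify how much weighting magnifies noise is Kish's effective sample size, (Σw)² / Σw². This is a standard survey-statistics approximation, not something taken from the TERPECA analysis. A minimal sketch, applied to a hypothetical sample of 100 respondents with the 40:59:1 split from the gender example above:

```python
def kish_effective_sample_size(weights):
    """Kish's approximation: n_eff = (sum of w)^2 / (sum of w^2).

    Equals the raw count when all weights are equal, and shrinks
    as the weights become more uneven.
    """
    total = sum(weights)
    return total * total / sum(w * w for w in weights)


# Hypothetical sample: 40 M, 59 F, 1 NB respondents, each weighted
# toward the 48:48:4 target population ratio.
sample_weights = [0.48 / 0.40] * 40 + [0.48 / 0.59] * 59 + [0.04 / 0.01] * 1

print(round(kish_effective_sample_size(sample_weights), 1))  # 88.8
```

Under this approximation, the 100 weighted responses carry roughly the precision of about 89 unweighted ones; the more extreme the weights (such as a single respondent weighted 4x or 9x), the larger the loss.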

Weighting for TERPECA horror bias analysis

IMPORTANT: This paper ONLY uses preference normalization as a tool to evaluate the existence of bias in TERPECA results. The authors are NOT proposing a weighting normalization approach to actually compute TERPECA ranking.

In the 2024 awards year, voters were asked their preference for horror on a 5-point scale (from “Strong dislike” to “Strong like”). We choose this stated preference for weighting instead of traditional demographic variables, for several reasons:

- Horror preference is central to the original bias hypothesis, and is independent of traditional demographics.

- TERPECA doesn’t survey traditional demographics.

- Normalizing a single variable is much simpler than multivariable normalization.

However, this choice of normalization variable has disadvantages:

- As of this writing, preference data is only available for the 2024 awards year.

- Self-reported horror preference is subjective, and may have its own confounding bias as voters interpret the scale differently.

- Other variables can independently confound play choices and ratings, such as physical requirements or geographic location. Normalizing only by stated preference misses these factors.

To evaluate the bias hypothesis as proposed, preference normalization weighting should be applied separately to each game, and more specifically to each pairwise comparison between games.

Applying normalization to TERPECA ranking

Ideally, normalization would be applied to every pairwise comparison, the TERPECA algorithm would be re-run, and the resulting ranking of rooms would be compared with the official ranking.

Unfortunately, many pairs have few or no voters for some preference levels, making normalization difficult for those pairs, and the complexity of TERPECA ranking makes understanding the impact difficult.

Therefore, in this analysis we start by examining direct pairwise vote ratios, counting the number of voters who ranked one room above the other versus the number who ranked them in the other order.
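A direct vote ratio can be computed from voters' ranked lists roughly as follows. This is a minimal sketch assuming each ballot is a simple best-first list of distinct games; it ignores ties and any other details of real TERPECA ballots, and the function names and game keys are hypothetical.

```python
from itertools import combinations


def pairwise_counts(ballots):
    """Count direct pairwise preferences from ranked lists.

    ballots: list of best-first ranked lists, one per voter.
    Returns {(above, below): n}: n voters ranked `above` over `below`.
    Only voters who ranked both games contribute to a pair.
    """
    counts = {}
    for ranking in ballots:
        # combinations() keeps list order, so `above` is always ranked higher.
        for above, below in combinations(ranking, 2):
            counts[(above, below)] = counts.get((above, below), 0) + 1
    return counts


def vote_ratio(counts, a, b):
    """Fraction of voters who ranked both games that preferred a over b."""
    wins_a = counts.get((a, b), 0)
    wins_b = counts.get((b, a), 0)
    total = wins_a + wins_b
    return wins_a / total if total else None


# Hypothetical ballots from three voters.
ballots = [
    ["A", "B", "C"],
    ["B", "A"],
    ["C", "A", "B"],
]
counts = pairwise_counts(ballots)
print(vote_ratio(counts, "A", "B"))  # 2 of 3 voters rank A above B
```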

We compute vote ratios with and without preference normalization weighting, focusing on pairs with ample voting data and differing horror levels. We then approximate how those changes could impact TERPECA results by characterizing the relationship between direct vote ratio and ranking placement. This process skips many subtleties of the ranking algorithm, but makes the analysis tractable.

Finally, we run the full TERPECA algorithm with normalization, ignoring the missing-data problem, to check whether the outcome is roughly similar to what the pairwise vote ratios predict.

Data

Population preference breakdown

First, we compute the distribution of horror preferences across all TERPECA voters in 2024 to use as a normalization target:

On our five-point survey scale, most TERPECA voters like horror, but all preferences are represented.

Sanity checking stated preference and game ranking

To check that the preference survey results make sense, we compare how voters with different preferences rank different games:

This chart shows where any finalist (#17 Stay in the Dark by default) lands in voter ranking lists (relative to list size), grouped by horror preference. Circles show individual voters, and box plot markers show 0/25/50/75/100th percentiles.
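A sketch of how such a chart's data could be assembled. It assumes "relative to list size" means a position scaled from 0.0 (a voter's top pick) to 1.0 (the bottom of their list); the exact scaling used for the chart is not stated, and percentile conventions vary (this uses Python's "inclusive" quartile method). Function names, preference labels and game keys are hypothetical.

```python
import statistics
from collections import defaultdict


def relative_position(ranking, game):
    """Position of `game` in one ranked list, scaled 0.0 (top) to 1.0 (bottom).

    Returns None if the voter did not rank the game or the list is too short.
    """
    if game not in ranking or len(ranking) < 2:
        return None
    return ranking.index(game) / (len(ranking) - 1)


def positions_by_preference(voters, game):
    """Group relative positions of `game` by stated horror preference.

    voters: iterable of (horror_preference, ranked_list) pairs.
    """
    groups = defaultdict(list)
    for preference, ranking in voters:
        pos = relative_position(ranking, game)
        if pos is not None:
            groups[preference].append(pos)
    return dict(groups)


def five_number_summary(values):
    """0/25/50/75/100th percentiles of a non-empty list."""
    if len(values) == 1:
        return (values[0],) * 5
    q1, med, q3 = statistics.quantiles(values, n=4, method="inclusive")
    return min(values), q1, med, q3, max(values)


# Hypothetical ballots; "S" stands in for the game being examined.
voters = [
    ("Strong like", ["S", "X", "Y"]),
    ("Strong like", ["X", "S"]),
    ("Strong dislike", ["X", "Y", "S"]),
]
for pref, positions in positions_by_preference(voters, "S").items():
    print(pref, five_number_summary(positions))
```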

For reference, games in TERPECA are assigned a horror level indicated by an icon:

- ☀️ No horror: The game doesn’t have horror-type theming or scary situations

- 👻 Spooky but not scary: The game has horror-type theming, but doesn’t focus on scaring players

- 🔦 Passively scary: Regardless of theme, the game uses techniques to create anxiety, but doesn’t startle players much

- 😱 Actively scary: Jump scares, simulated attacks or sudden actions repeatedly or seriously startle or frighten players

As expected, voters who report liking horror generally rank “actively scary” games (like Stay in the Dark) higher. This correlation does not indicate selection bias, just that preferences correlate with rankings in the expected way.

(The correlation is weaker for games with the intermediate horror levels of “spooky” or “passively scary”.)

Normalizing votes by horror preference

Next, we examine the effects of normalization on specific pairwise comparisons between games:

This chart shows voter preference between any pair of games (#7 The Dome and #8 Chapel & Catacombs by default) with enough voting data (at least 100 voters who have played both).

Dark-brown and dark-blue bars show the actual, unweighted number of voters in each preference category preferring the first game or second game, respectively. Light-orange and light-blue bars show those numbers weighted to normalize the preference distribution. The re-weighting preserves the ratio of choices in each preference category, but increases or decreases the count in each category to match the global preference breakdown shown previously.
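The per-pair reweighting described above can be sketched as follows. This is one plausible reading, not the analysis code itself: each preference category's total within a pair is rescaled to (population share × number of voters in the pair), keeping that category's split between the two games unchanged. Categories with no voters in a pair cannot be rescaled; here they are simply left out, which implicitly renormalizes the remaining categories (an assumption). All names and data are hypothetical.

```python
from collections import Counter


def target_shares(all_preferences):
    """Population preference breakdown across all voters (the normalization target)."""
    counts = Counter(all_preferences)
    total = sum(counts.values())
    return {pref: n / total for pref, n in counts.items()}


def normalize_pair(pair_votes, shares):
    """Reweight one pairwise comparison toward the population breakdown.

    pair_votes: {preference: (votes_for_first, votes_for_second)}, counting
    only voters who ranked both games. Each category's total becomes
    shares[pref] * (all voters in this pair); its first:second ratio is kept.
    Empty categories are skipped (assumption: implicit renormalization).
    """
    n_pair = sum(a + b for a, b in pair_votes.values())
    weighted = {}
    for pref, (a, b) in pair_votes.items():
        n_cat = a + b
        if n_cat == 0:
            continue
        w = shares[pref] * n_pair / n_cat  # per-voter weight for this category
        weighted[pref] = (a * w, b * w)
    return weighted


def first_game_share(pair_votes):
    """Fraction of (possibly weighted) votes preferring the first game."""
    first = sum(a for a, _ in pair_votes.values())
    second = sum(b for _, b in pair_votes.values())
    return first / (first + second)


# Hypothetical data: population is 70% "like", 30% "dislike";
# 10 voters ranked both games in this pair.
shares = target_shares(["like"] * 7 + ["dislike"] * 3)
pair = {"dislike": (2, 0), "like": (3, 5)}
print(first_game_share(pair))                          # actual: 0.5
print(first_game_share(normalize_pair(pair, shares)))  # normalized: about 0.56
```

Here the two "dislike" voters are underrepresented (20% of the pair versus 30% of all voters), so each of their votes is weighted 1.5x, shifting the pair toward the game they preferred.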

For example, if we inspect the default comparison of The Dome with Chapel & Catacombs:

- In general, horror affinity correlates with support for C&C (blue bars get bigger going to the right).

- In general, normalization increases horror-averse vote weight (light bars are larger than dark bars on the left, and smaller on the right).

- Specifically, only 3 voters with a “Strong dislike” for horror ranked both games; all 3 prefer The Dome.

- Reweighting inflates those 3 votes to 18.8, keeping them all in support of The Dome. (Note the concern about magnifying noise!)

- By comparison, 85 voters with a “Strong like” for horror ranked both games; 27 prefer The Dome and 58 prefer C&C.

- Reweighting deflates those 85 votes to 63.4, with 20.1 supporting The Dome and 43.3 supporting C&C.

- Overall, the actual fraction of voters preferring The Dome was 51.1%. With reweighting, it is 57.0%.
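The per-category figures above can be checked with simple arithmetic, using only the numbers quoted in this example: each category gets a single multiplier, so its split between the two games is unchanged.

```python
# "Strong dislike": 3 actual voters, reweighted to 18.8 (all for The Dome).
w_strong_dislike = 18.8 / 3   # weight per "Strong dislike" voter in this pair

# "Strong like": 85 actual voters (27 Dome, 58 C&C), reweighted to 63.4.
w_strong_like = 63.4 / 85     # weight per "Strong like" voter in this pair

dome_votes = 27 * w_strong_like
cc_votes = 58 * w_strong_like

print(f"{w_strong_dislike:.2f} {w_strong_like:.3f}")  # 6.27 0.746
print(f"{dome_votes:.1f} {cc_votes:.1f}")             # 20.1 43.3
```

So, in this particular pair, one "Strong dislike" vote counts about 8.4 times as much as one "Strong like" vote (6.27 / 0.746).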

Overall, preference normalization MOSTLY decreases support for actively scary games, which is consistent with the bias hypothesis.

VERY IMPORTANT: Weighted data is NOISY. Do NOT conclude ANYTHING about any one game from this analysis!

For example, if you select #15 The Hairdressing ☀️ vs #62 The House 😱, voters with a “Strong dislike” for horror prefer The House, which is surprising as it is much scarier. However, that statistic is based on ONE voter whose opinion is weighted 9x. That voter has their reasons, but anyone who played The House (La Casa) would agree it is not recommended for horror-averse players in general!

To avoid being confused by amplified outlier noise, normalized data should ONLY be used to understand general trends.

Summary statistics for pairwise vote ratio changes by horror level

To study trends in vote ratio changes after normalization, we summarize results for all pairs with at least 100 voters:

This chart shows the difference between normalized and actual voter preference for all pairs of top-100 games with at least 100 voters and differing horror levels, grouped by the games’ horror levels. Positive differences mean normalization supports the less-scary game, negative differences mean normalization supports the more-scary game.

Circles show individual pairwise comparisons; box plot markers show percentiles as above.
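The grouping and sign convention used in this chart can be sketched as below. It assumes each pair record already holds the actual and normalized vote share for one of its games (filtering to top-100 games with at least 100 voters is left to the caller). The level keys standing in for ☀️/👻/🔦/😱 and the record field names are hypothetical.

```python
from collections import defaultdict
from statistics import mean, median

# Hypothetical keys for the four TERPECA horror levels, least to most scary.
HORROR_ORDER = {"none": 0, "spooky": 1, "passive": 2, "active": 3}


def ratio_shift_by_level(pairs):
    """Group (normalized - actual) vote share changes by horror levels.

    pairs: iterable of dicts with keys level_a, level_b, actual_a,
    normalized_a (vote shares for game a, as fractions).
    Each shift is oriented toward the less-scary game, so a positive
    value means normalization supports the less-scary game.
    Pairs with equal horror levels are skipped.
    Returns {(less_scary_level, more_scary_level): (mean, median, count)}.
    """
    groups = defaultdict(list)
    for p in pairs:
        la, lb = p["level_a"], p["level_b"]
        if HORROR_ORDER[la] == HORROR_ORDER[lb]:
            continue
        shift = p["normalized_a"] - p["actual_a"]
        if HORROR_ORDER[la] > HORROR_ORDER[lb]:
            # Game b's share is 1 - game a's, so its shift is the negation.
            la, lb, shift = lb, la, -shift
        groups[(la, lb)].append(shift)
    return {k: (mean(v), median(v), len(v)) for k, v in groups.items()}


# Hypothetical pairs.
pairs = [
    {"level_a": "none", "level_b": "active", "actual_a": 0.50, "normalized_a": 0.55},
    {"level_a": "active", "level_b": "none", "actual_a": 0.60, "normalized_a": 0.57},
    {"level_a": "spooky", "level_b": "spooky", "actual_a": 0.50, "normalized_a": 0.60},
]
print(ratio_shift_by_level(pairs))  # ("none", "active"): mean shift of about +0.04
```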

This summary view shows that normalization shifts support away from actively scary games compared to others. For example, non-horror games ☀️ gain an average of 4.2% compared to actively scary games 😱. (The effect is much less pronounced for comparisons between intermediate horror levels.)

Importantly, normalization also adds substantial noise, as seen in the wide spread of ratio changes for actively scary game comparisons.

Relationship between vote ratios and TERPECA ranking

Then, we investigate how a change in vote ratio (such as the 4.2% above) might affect actual TERPECA ranking:

This chart shows the same pairs of games as above with actual (not normalized) direct vote ratio and the difference in official TERPECA rank. The dashed white line is a LOESS curve fit to the points.

For example, above we studied #7 The Dome versus #8 Chapel & Catacombs, with an actual vote ratio of 51% and a rank difference of 1. That places its data point at x=1 and y=51%, close to the midpoint of the graph.

The full TERPECA algorithm uses many such pairwise comparisons in a complex way to compute final score and ranking, so the points are scattered, but ranking distance is clearly well correlated with direct pairwise results. We estimate that, for closely ranked games, each place of rank difference corresponds to roughly a 1% change in direct vote ratio.

So, the 4.2% vote ratio change for actively scary games after normalization could indicate a drop of ~4 rank positions for those games.
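The conversion from vote ratio change to rank places can be sketched as below. The chart uses a LOESS curve; this sketch swaps in a simpler technique, a least-squares line through the 50% midpoint fitted only to closely ranked pairs. The cutoff of 10 rank places and all names are hypothetical choices for illustration.

```python
def share_change_per_rank(points, max_rank_gap=10):
    """Least-squares slope of direct vote share vs. rank difference.

    points: (rank_difference, vote_share_of_better_ranked_game) tuples.
    Only closely ranked pairs (0 < rank_difference <= max_rank_gap) are
    used, and the line is forced through (0, 0.5), i.e. equally ranked
    games are assumed to split votes 50/50.
    """
    close = [(x, y - 0.5) for x, y in points if 0 < x <= max_rank_gap]
    if not close:
        raise ValueError("no closely ranked pairs")
    return sum(x * y for x, y in close) / sum(x * x for x, _ in close)


def rank_places(share_change, slope):
    """Approximate number of rank places equivalent to a vote share change."""
    return share_change / slope


# Hypothetical points; the last pair is too far apart to be used.
points = [(1, 0.51), (2, 0.52), (4, 0.54), (30, 0.90)]
slope = share_change_per_rank(points)     # about 0.01 (1% per rank place)
print(round(rank_places(0.042, slope), 1))  # 4.2
```

With a slope of about 1% per rank place, a 4.2% vote ratio change maps to roughly 4 places, which is the same back-of-envelope reasoning as the text.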

IMPORTANT: This is VERY APPROXIMATE and not predictive of where any particular game would land after a win/loss change!

Running the full ranking algorithm with normalization

Next, we run the full TERPECA ranking algorithm after normalizing votes by horror preference. There are many caveats here (pairs with incomplete preference representation, vote weighting approach, etc), but it provides a cross-check on the vote ratio analysis above.

This chart shows the direct impact of horror preference normalization on ranking. Each circle is one game; the X axis is the current official rank and the Y axis is the rank change from normalization. Points are color coded by horror level.

For example, #1 Magnifico’s Circus remains in the top spot after normalization, so its circle is at X=1, Y=0 at the far left of the chart. #7 The Dome moved up two spots after normalization, so its circle is at X=7, Y=-2.

Noise is very evident as rooms of all types move up and down by large amounts. However, actively scary rooms (red circles) do seem to be generally demoted in results (lower in the chart) after normalization.

IMPORTANT: These results are VERY NOISY and ABSOLUTELY NOT indicative that any one game is “underrated” or “overrated”. Running the full algorithm with normalization requires normalizing every pairwise comparison, even those with very few votes, so this analysis is even noisier than the vote ratio analysis above. This data must ONLY be used to understand general trends.

Summary statistics for ranking changes by horror level

To study trends in ranking changes after normalization, we summarize the changes for games of each horror level:

This chart shows the difference between normalized and original rank for the top 100 games. Negative differences mean the game was promoted (lower-numbered rank), positive differences mean the game was demoted (higher-numbered rank). Circles show individual games; box plot markers show percentiles as before.

This summary view shows that, amid the noise, normalization demoted actively scary games by a median of 4 places; other games were promoted by a median of 1-3 places. This is directionally consistent with the horror bias hypothesis, and numerically consistent with the vote ratio studies above.
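The per-level summary can be sketched as below, using the same sign convention as the charts (rank change = normalized rank minus official rank, so #7 The Dome moving to #5 is -2). The interquartile range (IQR) is the spread figure used in the conclusions. Quartile conventions vary; this uses Python's "inclusive" method. The function name, level keys and example ranks are hypothetical.

```python
from collections import defaultdict
from statistics import median, quantiles


def rank_change_summary(official, normalized, levels):
    """Median and IQR of rank change for each horror level.

    official, normalized: {game: rank}, where 1 is the top rank.
    levels: {game: horror_level}.
    Rank change = normalized - official, so negative means promoted.
    """
    changes = defaultdict(list)
    for game, old_rank in official.items():
        if game in normalized:
            changes[levels[game]].append(normalized[game] - old_rank)
    summary = {}
    for level, values in changes.items():
        if len(values) >= 2:
            q1, _, q3 = quantiles(values, n=4, method="inclusive")
            iqr = q3 - q1
        else:
            iqr = 0.0
        summary[level] = {"median": median(values), "iqr": iqr, "n": len(values)}
    return summary


# Hypothetical ranks for five games.
official = {"a": 1, "b": 2, "c": 3, "d": 4, "e": 5}
normalized = {"a": 2, "b": 1, "c": 5, "d": 3, "e": 4}
levels = {"a": "none", "b": "none", "c": "active", "d": "none", "e": "active"}
print(rank_change_summary(official, normalized, levels))
```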

Conclusions and recommendations

To summarize the data analysis:

- Actively scary games have ~4% less vote share after normalizing by horror preference, corresponding to ~4 rank places.

- Similarly, actively scary games are demoted by ~4 rank places when running the full algorithm with normalization.

- Only ACTIVELY scary games show this effect. Spooky and passively scary games show little or no change.

- There are VERY MANY assumptions and approximations in this analysis.

Therefore, in a hypothetical world where every voter played and ranked every finalist, actively scary games COULD move an average of APPROXIMATELY 4 places lower in the TERPECA results.

However, directly correcting this effect without introducing more problems would be challenging:

- Normalization adds significant noise to individual game rankings, with a rank-change interquartile range (IQR) of ~6 positions. Scrambling game rankings by 6 places or more would greatly degrade TERPECA’s quality!

- Normalization over-amplifies the voices of those few horror-averse voters who do choose to play horror games.

- Using stated preference (or inferred preference) in ranking would create difficult conflicting incentives for strategic voting.

- Using horror level in ranking would create other conflicting incentives for categorization.

- Finally, “all voters are forced to play and rank every finalist” may not be an ideal target. If voters are forced to play games they don’t enjoy, would they rank based on their experience, their estimation of the craft, or their recommendations for a fan of the genre?

In conclusion, this analysis does indicate a modest bias favoring actively scary games in TERPECA results. However, it seems difficult to directly correct this bias in a fair and consistent way without adding ways to game the system or degrading the quality of results.

Therefore, we recommend accepting this level of bias and uncertainty in TERPECA results, and we do not recommend taking algorithmic action to correct this effect. However, we do recommend emphasizing the difficulty of comparing actively scary horror experiences with other games, encouraging players to always take their own preferences into account, and continuing to clearly label horror games.

Official decision

As of Oct 8, 2025, the TERPECA board has reviewed this report and accepted its recommendations: to avoid algorithmic normalization for now, to continue labeling horror games, and to recommend informed choice by players as always.

This decision is subject to revision based on further analysis and/or changes in the world of escape games; input is always welcome.

References

Survey weighting techniques

- Sage Encyclopedia of Research Methods: Weighting

- Pew Research: For Weighting Online Opt-In Samples, What Matters Most?

- Survey Methods Journal: Special issue on Weighting

- SurveyMonkey: A guide to weighting surveys for market research

- Survey Practice Journal: Post-stratification or non-response adjustment?

Similar issues in other fields

- intfiction.org: Growing number of comp entries and reviewer selection bias

- The Atlantic: And The Oscar Goes To… Something The Voters Didn’t Watch

- Vox: The Grammy voting process is completely ridiculous

- PNAS: Reviewer bias in single- versus double-blind peer review

- PACMCHCI: Prior and Prejudice: The Novice Reviewers’ Bias against Resubmissions in Conference Peer Review